Installing Avi Vantage for VMware vCenter

Overview

This guide explains how to integrate Avi Vantage into a VMware vCenter cloud. A single Avi Controller cluster supports multiple concurrent vCenter clouds.

Avi Vantage is a software-based solution that provides real-time analytics and elastic application delivery services. Avi Vantage optimizes core web functions, including SSL termination and load balancing.

Note: Starting with NSX Advanced Load Balancer version 21.1.6, network objects in NSX Advanced Load Balancer sync with the name of the associated port group in vCenter. Previously, the port group name in vCenter and the network name in NSX Advanced Load Balancer could be changed independently of each other.

Points to Consider

- Write access is the recommended deployment mode. It is the quickest and easiest way to deploy and offers the highest levels of automation between Avi Vantage and vCenter.

- After completing the deployment process, refer to Virtual Service Creation for more information on creating virtual services.

- Avi Vantage can be deployed with a VMware cloud in either no access or write access mode. Each mode is associated with different functionality and automation, and requires a different level of privileges for the Avi Controller within VMware vCenter. For complete information, refer to Avi Vantage Interaction with vCenter.

- The Avi Vantage administrator needs to download only one Service Engine image for each required image type (ova/qcow2/docker). The same Service Engine image can then be used to deploy Service Engines in any tenant and cloud configured in the system. For more information, refer to Manually Deploy Service Engines in Non-Default Tenant/Cloud.

- It is recommended to use the built-in Virtual Service Migration functionality.

Integrating Avi Vantage with vCenter

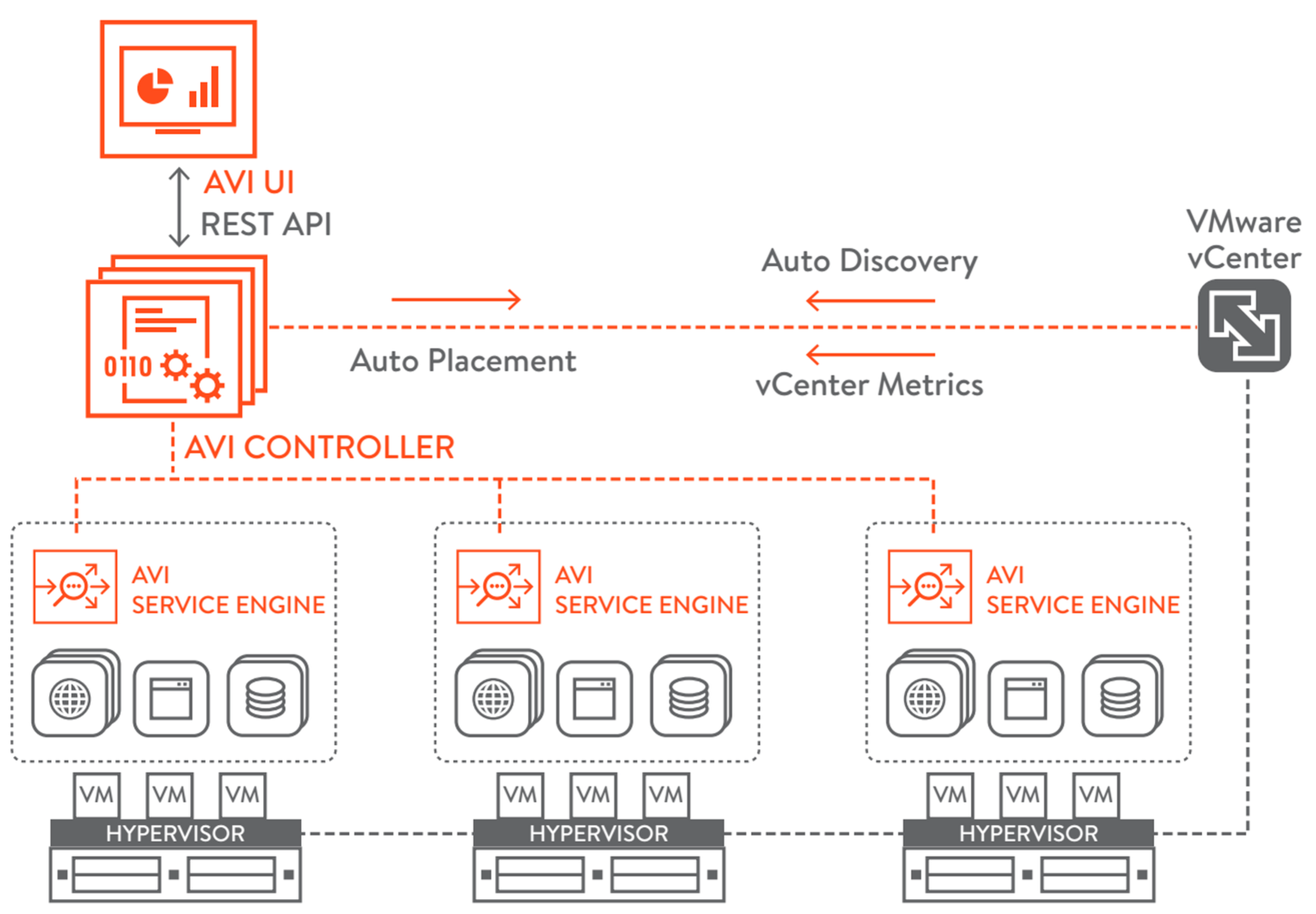

Avi Vantage runs on virtual machines (VMs) managed by VMware vCenter. When deployed into a vCenter-managed VMware cloud, Avi Vantage operates as a fully distributed, virtualized system consisting of the Avi Controller and Avi Service Engines, each running as a VM.

The Avi Vantage Platform is built on software-defined architectural principles which separate the data plane and control plane. The product components include:

- Avi Controller (control plane): The Avi Controller stores and manages all policies related to services and management. Through vCenter, the Avi Controller discovers VMs, data centers, networks, and hosts. Based on this auto-discovered information, virtual services can quickly be added using the web interface. To deploy a virtual service, the Avi Controller automatically selects an ESX server, spins up an Avi SE (described below), and connects it to the correct networks (port groups).

Note: Avi Controllers need access to the desired ESXi hosts (over port 443) to allow the Avi Controller-to-vCenter communication.

The Avi Controller can be deployed as a single VM or as a high availability cluster of 3 Avi Controller instances, each running on a separate VM.

- Avi Service Engines (data plane): Each Avi Service Engine runs on its own virtual machine. The Avi SEs provide the application delivery services to end-user traffic, and also collect real-time end-to-end metrics for traffic between end users and applications.

vCenter Integration Enhancements in 21.1.6

The Avi vCenter integration has been enhanced in 21.1.6 to:

- Support better performance for vCenter environments

- Utilize the Content Library for storing the Service Engine OVA image

In addition, vCenter Read Access mode is no longer supported. Refer to Considerations for vCenter Cloud while upgrading from prior releases to 21.1.6.

Deployment Prerequisites

Virtual Machine Requirements

Refer to the Hardware Requirements document for the minimum hardware needed to install the Avi Controller and Service Engines.

The Avi Controller can also be deployed as a three-node cluster for redundancy. A separate VM is required for each of the three Avi Controller nodes, and each VM has the same requirements as a single-node deployment. Refer to Overview of Avi Vantage High Availability for more information on High Availability. Ensure that the ESX host has the required physical resources; Service Engine creation will fail in the absence of these resources.
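The resource check described above amounts to multiplying a per-VM requirement by the number of VMs and comparing the total against free capacity. The following is a minimal sketch under stated assumptions: the `Resources` type is hypothetical, the per-VM and free-capacity figures are placeholders (real minimums come from the Hardware Requirements document, and free capacity would have to be read from vCenter, which this sketch does not do), and the three nodes are compared against a single host only to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    """A VM requirement or a host's free capacity (vCPUs, memory GB, disk GB)."""
    vcpus: int
    memory_gb: int
    disk_gb: int

    def scale(self, count: int) -> "Resources":
        # Total needed for `count` VMs that share the same per-VM requirement.
        return Resources(self.vcpus * count, self.memory_gb * count, self.disk_gb * count)

    def fits_in(self, free: "Resources") -> bool:
        # Every dimension must be covered; a single shortfall fails the check.
        return (
            self.vcpus <= free.vcpus
            and self.memory_gb <= free.memory_gb
            and self.disk_gb <= free.disk_gb
        )


if __name__ == "__main__":
    # Placeholder numbers only; use the Hardware Requirements document for real values.
    per_controller = Resources(vcpus=8, memory_gb=24, disk_gb=128)
    cluster = per_controller.scale(3)  # three-node Controller cluster
    host_free = Resources(vcpus=32, memory_gb=64, disk_gb=1000)
    # Memory is short here: 72 GB needed versus 64 GB free.
    print(cluster, cluster.fits_in(host_free))
```

The same `fits_in` check applies per host when the nodes or Service Engines are spread across several ESX hosts.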

Note: For optimal performance, Avi recommends that the Controller VM vCPU and Memory be reserved in vCenter.

Service Engine VM requirements

The following are the Service Engine VM requirements:

| Requirement | Description |
|---|---|
| RAM | Add 1 GB of RAM to the SE configuration for each additional vCPU. |
| CPU socket affinity | Select this option to have the SEs in an SE group allocate vCPU cores from the same socket of a multi-socket CPU. |
| Dedicated dispatcher CPU | Select this option to have the SEs in an SE group dedicate a single CPU thread to dispatching data flows to the other vCPU threads. This is relevant for SEs with three or more vCPUs. |
| Disk | Set the disk size to at least (2 * RAM_size) + 5 GB, with an overall minimum of 15 GB. |

For more details on the Service Engine VM requirements, refer to Service Engine Capacity and Limit Settings.
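The RAM and disk rules in the table can be turned into a small sizing helper. This is a sketch, not a sizing tool: it reads the disk rule as (2 * RAM) + 5 GB with a 15 GB floor, and the base configuration passed in (`base_memory_gb`, `base_vcpus`) is a hypothetical input, since the base SE size is not stated in this section.

```python
def se_memory_gb(base_memory_gb: int, base_vcpus: int, vcpus: int) -> int:
    """RAM for an SE: the base size plus 1 GB for each vCPU beyond the base count."""
    if vcpus < base_vcpus:
        raise ValueError("vcpus must not be smaller than base_vcpus")
    return base_memory_gb + (vcpus - base_vcpus)


def se_min_disk_gb(memory_gb: int) -> int:
    """Minimum SE disk: (2 * RAM) + 5 GB, but never less than 15 GB."""
    return max(2 * memory_gb + 5, 15)


if __name__ == "__main__":
    # Hypothetical base of 2 GB RAM with 1 vCPU, scaled up to 4 vCPUs.
    ram = se_memory_gb(base_memory_gb=2, base_vcpus=1, vcpus=4)
    print(ram, se_min_disk_gb(ram))
```

With small RAM sizes the 15 GB floor dominates; the (2 * RAM) + 5 GB term only takes over above 5 GB of RAM.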

Note: For optimal performance, Avi recommends that the Service Engine VM vCPU and Memory be reserved in vCenter.

Software Requirements

For further details on system requirements, refer to the Ecosystem Support guide.

The Avi Controller OVA contains the image files for the Avi Controller and Avi SEs.

VMware vCenter credentials are required for write access mode deployment.

Note: In a single Controller cluster, if you need to have both vCenter and NSX-T cloud types, then it is recommended to have a dedicated content library for vCenter and NSX-T clouds respectively.

IP Address Requirements

The Avi Controller requires one management IP address. Administrative commands are configured on the Controller by accessing it through this IP address. The Controller also uses the management IP address to communicate with the Service Engines. The management IP addresses of all Controllers within a cluster should belong to the same subnet. For more information, refer to the Controller Cluster IP document.
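Before forming a cluster, the same-subnet requirement can be checked with Python's standard `ipaddress` module. The sketch below assumes every Controller uses the same prefix length; the addresses are hypothetical examples.

```python
import ipaddress


def same_subnet(addresses: list[str], prefix_len: int) -> bool:
    """True if all addresses fall inside one network of the given prefix length."""
    if not addresses:
        return False
    networks = {
        ipaddress.ip_interface(f"{addr}/{prefix_len}").network for addr in addresses
    }
    return len(networks) == 1


if __name__ == "__main__":
    controllers = ["10.10.10.11", "10.10.10.12", "10.10.10.13"]  # hypothetical addresses
    print(same_subnet(controllers, 24))
```

The same function works for IPv6 management addresses, provided the prefix length is valid for that address family.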

Each Avi Service Engine requires one management IP address, a virtual service IP address, and an IP address that faces the pool network.

For quick deployments, DHCP is recommended over static assignment for Avi SE management and the pool network IP address allocation.

Note: Use a static IP for Avi Controller management address, unless your DHCP server can preserve the assigned IP address permanently.

The virtual service IP address is provided as input while creating the load balancing application. You can automate the virtual service IP address allocation by integrating it with an IPAM service. For more information, refer to IPAM and DNS Support.

Avi Vantage load balances traffic destined for the VIP address:port across the members (servers) of the pool.

Changing vCenter IP in existing vCenter Cloud

Note: You can change the vCenter IP address by setting the cloud to No Access.

The following are the steps to change vCenter IP in existing vCenter cloud on NSX Advanced Load Balancer:

- Disable the virtual services. Disabling the virtual services leaves the SEs idle; delete these idle SEs before setting the cloud to No Access.

- Delete the old vCenter configuration from the cloud by setting the cloud to No Access in the UI.

- Configure the new vCenter in the same Cloud.

- Update the SE group and network objects to use the new vCenter configuration in the cloud.

- Enable the virtual services.

Note: If a virtual service or pool has placement networks, go to each such virtual service or pool after the new cloud configuration comes up and point its placement networks to the new network object. You can check for placement networks in the virtual service configuration or in the avi_config file from show techsupport.
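Finding and re-pointing placement networks by hand can be tedious when there are many objects. The sketch below assumes a simplified, hypothetical layout in which an exported configuration is a dict keyed by object type ("VirtualService", "Pool"), and each object may carry a `placement_networks` list of entries with a `network_ref` field. The actual avi_config layout may differ, so treat these keys as placeholders; the sketch only edits an in-memory dict and does not push anything to the Controller.

```python
from typing import Any

# Hypothetical, simplified config layout: {object_type: [object, ...]}
Config = dict[str, list[dict[str, Any]]]
OBJECT_TYPES = ("VirtualService", "Pool")


def objects_with_placement_networks(config: Config) -> list[tuple[str, str]]:
    """Return (object type, name) for every object that has placement networks."""
    return [
        (obj_type, obj["name"])
        for obj_type in OBJECT_TYPES
        for obj in config.get(obj_type, [])
        if obj.get("placement_networks")
    ]


def repoint_placement_networks(config: Config, old_ref: str, new_ref: str) -> int:
    """Replace old_ref with new_ref in placement network entries; return the count changed."""
    changed = 0
    for obj_type in OBJECT_TYPES:
        for obj in config.get(obj_type, []):
            for entry in obj.get("placement_networks", []):
                if entry.get("network_ref") == old_ref:
                    entry["network_ref"] = new_ref
                    changed += 1
    return changed
```

Listing the affected objects first gives a checklist of virtual services and pools to revisit once the new cloud configuration is up.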

vCenter Account Requirements

During the initial Controller setup, you must enter a vCenter account to allow communication between the Controller and vCenter. The vCenter account must have privileges to create new folders in vCenter. This is required for Service Engine creation, which in turn allows virtual service placement.

For complete information on VMware user role and privileges, refer to VMware User Role for Avi Vantage.

Modes of Deployment

Depending on the level of vCenter access provided, Avi Vantage can be deployed in a VMware cloud in the following modes:

- Write access mode – This mode requires a vCenter user account with write privileges. The Avi Controller automatically spins up Avi Service Engines as needed, and accesses vCenter to discover information about the networks and VMs.

- No access mode – The Avi Controller does not access vCenter. The Avi Vantage and vCenter administrators manually deploy Avi Service Engines, define networks and interface IP addresses, and map the Service Engines to the correct networks.

Note: IPv6 is supported for VMware vCenter in Avi Vantage.

Configuring IP address pools

Note: This section is applicable only for static IP address allocation.

Each Avi SE deployed in a VMware cloud has 10 vNICs. The first vNIC is the management vNIC, through which the Avi SE communicates with the Avi Controller. The other vNICs are data vNICs and are used for end-user traffic.

After spinning up an Avi SE, the Avi Controller connects the Avi SE’s management vNIC to the management network specified during initial configuration. The Avi Controller then connects the data vNICs to virtual service networks according to the IP and pool configuration of the virtual services.

The Avi Controller builds a table that maps port groups to IP subnets. With this table, the Avi Controller connects Avi SE data vNICs to port groups that match virtual service networks and pools.

After a data vNIC is connected to a port group, it needs to be assigned an IP address. For static allocation, assign a range of IP addresses to the applicable port group. The Avi Controller selects an IP address from the specified range and adds the address to the data vNIC connected to the port group.
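The port-group-to-subnet table and the static range can be modeled with Python's `ipaddress` module. This is an illustrative sketch only: the port group names are hypothetical, and handing out addresses in ascending order is an assumption made for simplicity, since this section does not describe how the Avi Controller picks an address from the range.

```python
import ipaddress
from typing import Optional


def port_group_for(ip: str, table: dict[str, str]) -> Optional[str]:
    """Look up the port group whose subnet contains ip (port group -> subnet table)."""
    addr = ipaddress.ip_address(ip)
    for port_group, subnet in table.items():
        if addr in ipaddress.ip_network(subnet):
            return port_group
    return None


class StaticIpPool:
    """Hands out addresses, in order, from an inclusive static range inside a subnet."""

    def __init__(self, subnet: str, first: str, last: str):
        network = ipaddress.ip_network(subnet)
        start, end = ipaddress.ip_address(first), ipaddress.ip_address(last)
        if start not in network or end not in network or start > end:
            raise ValueError("static range must be ordered and lie inside the subnet")
        self._next = start
        self._end = end
        self._exhausted = False

    def allocate(self):
        """Return the next free address, or None once the range is used up."""
        if self._exhausted:
            return None
        addr = self._next
        if addr == self._end:
            self._exhausted = True
        else:
            self._next = addr + 1
        return addr


if __name__ == "__main__":
    # Hypothetical port groups and subnets.
    table = {"pg-mgmt": "10.10.10.0/24", "pg-web": "10.10.20.0/24"}
    pg = port_group_for("10.10.20.5", table)  # port group for a VIP or pool server
    pool = StaticIpPool(table[pg], "10.10.20.100", "10.10.20.150")
    print(pg, pool.allocate())
```

Validating that the range lies inside the subnet up front mirrors the UI flow below, where the static range is entered against a specific IP subnet of a port group.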

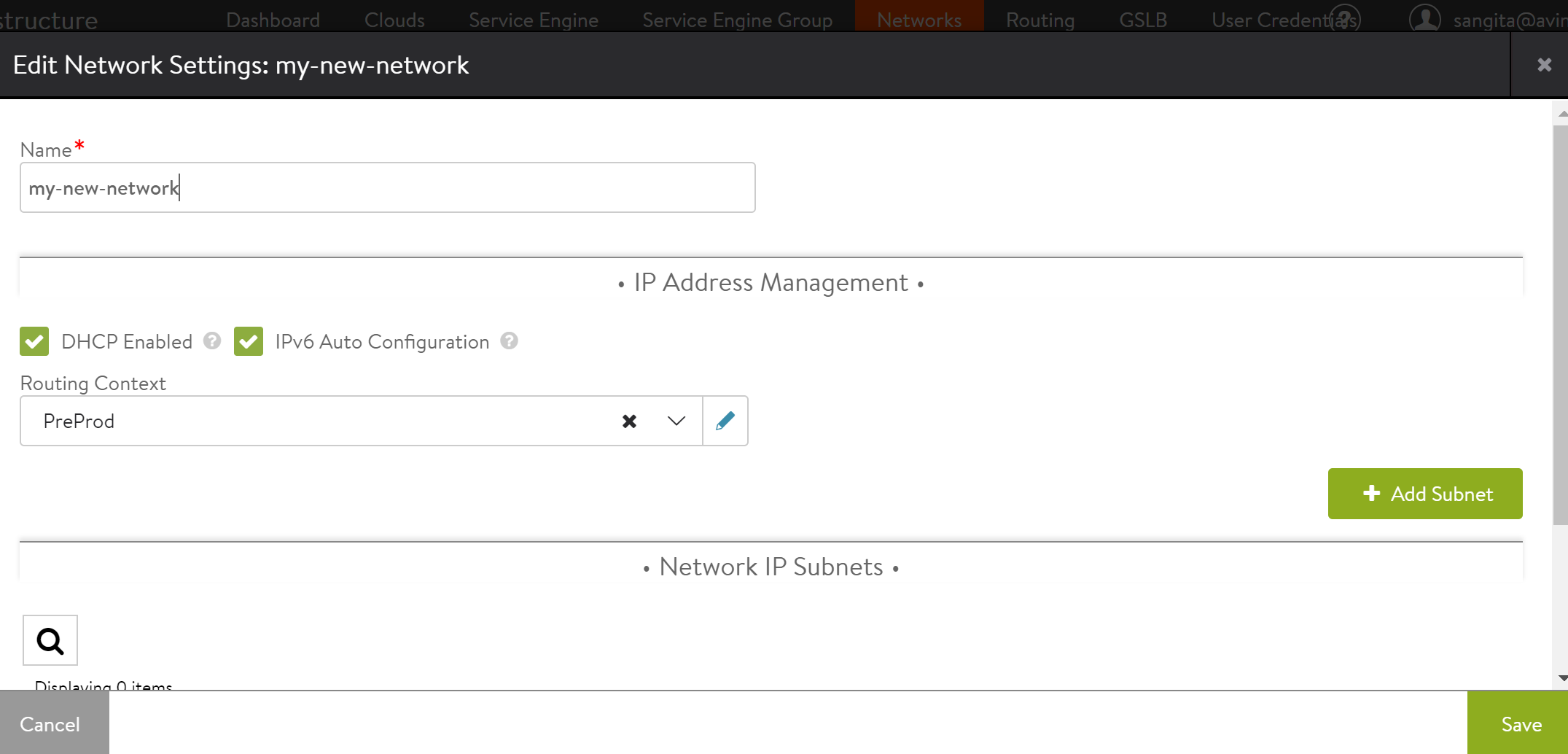

Configure IP address pools for networks hosting Avi Service Engines as follows:

- Navigate to Infrastructure > Clouds > Default-Cloud. Click the edit icon and select the Network tab in the Default Cloud window.

- Find a port group and IP subnet on which the DHCP service is not available.

- Select the port group by clicking on the edit icon.

- Select Static under Network IP Address Management.

- Select the IP Subnet by clicking on the edit icon.

- Enter the static IP address or the range of IP addresses.

Considerations for vCenter Cloud while upgrading from prior releases to 21.1.6

- vCenter Read Access mode is not supported starting with NSX Advanced Load Balancer 21.1.6. The deprecation was announced in the 21.1.3 release notes. Any upgrade to 21.1.6 with a cloud configured in vCenter Read Access mode will fail and be rolled back.

- The Service Engine management network (port group) must be provisioned with enough free ports for the planned number of Service Engines multiplied by 10. For instance, if the administrator plans to deploy 50 Service Engines, the management network/port group allocated for Avi Service Engines should have at least 500 unused ports (50 Service Engines * 10 vNICs per Service Engine).

Note: The Avi Internal port group is not used starting with NSX Advanced Load Balancer 21.1.6. Service Engines upgraded from a prior release retain the vNICs attached to the Avi Internal port group, but any vNIC updated later uses the Service Engine management network/port group.

- Starting with NSX Advanced Load Balancer 21.1.6, the vCenter cloud configuration has a new option, use_content_lib, to utilize the content library for storing the Service Engine OVA instead of storing it on the respective ESXi hosts. After upgrading to 21.1.6, you can configure the content library as part of the vCenter cloud configuration for new Service Engine deployments. Once this option is configured, it cannot be disabled.

- Starting with NSX Advanced Load Balancer 21.1.6, the existing vCenter APIs and CLIs are modified. Refer to the vCenter Cloud API CLI guide.
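The free-port rule for the Service Engine management port group is simple arithmetic: planned Service Engines multiplied by 10 vNICs. A small helper makes it easy to check a planned deployment. This is a sketch only: the function names are illustrative, and `free_ports` must be read from the port group in vCenter, which this code does not do.

```python
VNICS_PER_SE = 10  # each SE deployed in a VMware cloud has 10 vNICs


def required_free_ports(planned_se_count: int, vnics_per_se: int = VNICS_PER_SE) -> int:
    """Free ports the SE management port group needs for the planned SE count."""
    if planned_se_count < 0:
        raise ValueError("planned_se_count must be non-negative")
    return planned_se_count * vnics_per_se


def has_enough_ports(free_ports: int, planned_se_count: int) -> bool:
    """Compare the port group's free ports with the requirement."""
    return free_ports >= required_free_ports(planned_se_count)


if __name__ == "__main__":
    # The 50-SE example from the text needs 500 free ports.
    print(required_free_ports(50), has_enough_ports(480, 50))
```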

Recommended Reading

- Virtual Service Creation

- Troubleshooting Avi Vantage Deployment into VMware

- Upgrading Avi Vantage Software

- Upgrades in an Avi GSLB Environment

Additional Information

- Verifying Username and Password for VMware vCenter from the Avi Controller

- How to Enable VLAN Trunking on an Avi Service Engine Running on ESX

Document Revision History

| Date | Change Summary |
|---|---|
| November 9, 2022 | Revamped the entire KB, along with removing Read Access details for 21.1.6 |