Installing AKO on TKGI

Overview

The Avi Kubernetes Operator (AKO) supports LoadBalancer Services and Ingress for Tanzu Kubernetes Grid Integrated (TKGI) clusters. This document describes the steps required to install AKO on a TKGI cluster.

A TKGI cluster can be created on NSX-T managed overlay networking with NCP as the Container Network Interface (CNI), or on regular VMware vSphere Distributed Switch (VDS) networking with Flannel as the CNI.

Because the Controller integrates with the infrastructure orchestrator (vCenter or NSX-T), the NSX Advanced Load Balancer is configured differently for each of these networking options.

TKGI with NCP on NSX-T Overlays

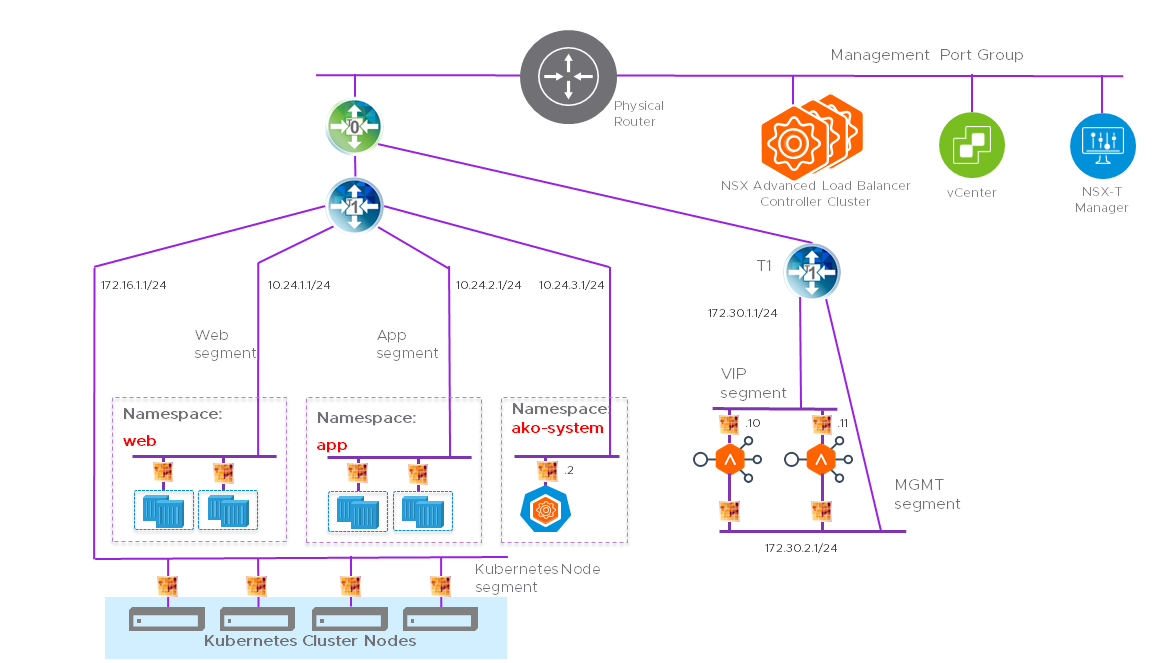

The NSX Advanced Load Balancer deployment in NSX-T environment is as shown below:

The design of this deployment is as follows:

The Controller

The NSX Advanced Load Balancer Controller cluster is deployed as a three-node cluster, connected to the management port group.

NSX-T Networking

Create a Tier-1 gateway for the NSX Advanced Load Balancer using the NSX-T manager UI.

Create two overlay segments connected to the Tier-1 gateway: one for SE management traffic and one for the VIP/data traffic.

The VIP network must have a routable subnet so that the VIPs are accessible from external clients. The management segment can also be routable, or outbound NAT can be configured on the Tier-1 gateway to allow the SEs to connect to the Controller. The required ports must be allowed on the DFW and the Edge firewall, as explained in the Protocol Ports Used by Avi Vantage for Management Communication article.
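The addressing plan for the two segments can be sanity-checked before any SE is deployed. The following is a minimal sketch, assuming each segment has its own subnet; the function name `check_segments` and the example CIDRs are hypothetical and not part of the product.

```python
import ipaddress


def check_segments(mgmt_cidr, vip_cidr, vip_pool):
    """Return a list of problems found in the planned addressing (empty if none)."""
    mgmt = ipaddress.ip_network(mgmt_cidr)
    vip = ipaddress.ip_network(vip_cidr)
    problems = []
    # The management and VIP segments are separate overlay segments,
    # so their subnets are assumed not to overlap.
    if mgmt.overlaps(vip):
        problems.append(f"management segment {mgmt} overlaps VIP segment {vip}")
    # Every planned VIP must fall inside the VIP segment's subnet.
    for addr in vip_pool:
        if ipaddress.ip_address(addr) not in vip:
            problems.append(f"VIP {addr} is outside VIP segment {vip}")
    return problems
```

For example, `check_segments("10.10.10.0/24", "10.10.20.0/24", ["10.10.20.50"])` returns an empty list. The check covers only the addressing; whether the VIP subnet is actually routable from external clients still has to be verified on the network.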

The Service Engines (SEs)

The Service Engine virtual machines load balance the workload traffic. The vnic0 of the SE VM connects to the management segment while the vnic1 connects to the VIP segment.

Leave the remaining interfaces on the VM disconnected.

With a vCenter cloud, these connections are made automatically. With a No Orchestrator cloud, they must be made manually.
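For a No Orchestrator cloud, where the interfaces are connected by hand, the intended wiring can be written down as a simple plan. This is only an illustrative sketch: the helper `se_interface_plan` is hypothetical, and the number of vNICs on the SE VM is passed in as a parameter rather than assumed.

```python
def se_interface_plan(total_vnics):
    """Map each SE vNIC to the segment it attaches to (None = leave disconnected)."""
    if total_vnics < 2:
        raise ValueError("an SE needs at least vnic0 (management) and vnic1 (VIP)")
    # vnic0 carries management traffic, vnic1 carries VIP/data traffic.
    plan = {"vnic0": "management", "vnic1": "vip"}
    # All remaining interfaces stay disconnected.
    for i in range(2, total_vnics):
        plan[f"vnic{i}"] = None
    return plan
```

Calling `se_interface_plan(4)` gives a plan in which `vnic2` and `vnic3` are left disconnected.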

AKO

Install AKO on the TKGI cluster in a namespace called avi-system.

Ensure that AKO can reach the NSX Advanced Load Balancer Controller IP address so that it can call the NSX Advanced Load Balancer APIs.

If the setup has multiple TKGI clusters, each cluster needs AKO installed on it, but all AKOs can share the same Controller and SE group.
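The reachability requirement above can be checked from a pod or node in the TKGI cluster before AKO is installed. The following is a minimal sketch, assuming the Controller serves its API over HTTPS on TCP port 443; the function name `controller_reachable` is hypothetical.

```python
import socket


def controller_reachable(host, port=443, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and resolution failures.
        return False
```

A call such as `controller_reachable("<controller-ip>")` only confirms that the TCP port is open. It does not validate credentials or the API version.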

Cloud Configuration

The cloud configuration on the Controller allows it to integrate with the IaaS platform and automate the provisioning and lifecycle of the SEs. The NSX Advanced Load Balancer supports an NSX-T cloud, but this is not compatible with a TKGI environment because the NSX Advanced Load Balancer uses the newer NSX-T Policy APIs while TKGI uses the Manager APIs.

You can instead configure a vCenter cloud, which can also automate the SE lifecycle. However, this is supported only if the NSX-created switch is of the CVDS type. If the NSX switch is an NVDS, use a No Orchestrator cloud and deploy the SEs manually.
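The choice of cloud type described above reduces to a small decision rule. The sketch below encodes only what this section states; the function name `choose_cloud_type` and the returned dictionary keys are hypothetical.

```python
def choose_cloud_type(nsx_switch_type):
    """Pick the Controller cloud type for a TKGI on NSX-T deployment."""
    kind = nsx_switch_type.strip().upper()
    if kind == "CVDS":
        # A vCenter cloud can automate the SE lifecycle on a CVDS.
        return {"cloud": "vCenter", "se_lifecycle": "automated"}
    if kind == "NVDS":
        # On an NVDS, SEs are deployed and connected manually.
        return {"cloud": "No Orchestrator", "se_lifecycle": "manual"}
    raise ValueError(f"unknown NSX switch type: {nsx_switch_type!r}")
```

The NSX-T cloud is deliberately absent from the result, since it is not compatible with TKGI.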

Deploying the NSX Advanced Load Balancer

- Download the Controller OVA from the VMware Customer Connect software downloads page.

- Deploy the virtual machines as discussed in Installing Avi Vantage for VMware vCenter.

- If you are using a vCenter write access cloud, configure the Controller. If you are using a No Orchestrator cloud, deploy the Service Engines.

- After deploying the SEs, connect interface 1 to the management segment and interface 2 to the VIP segment as shown in the diagram.

Configuration

Configure TKGI

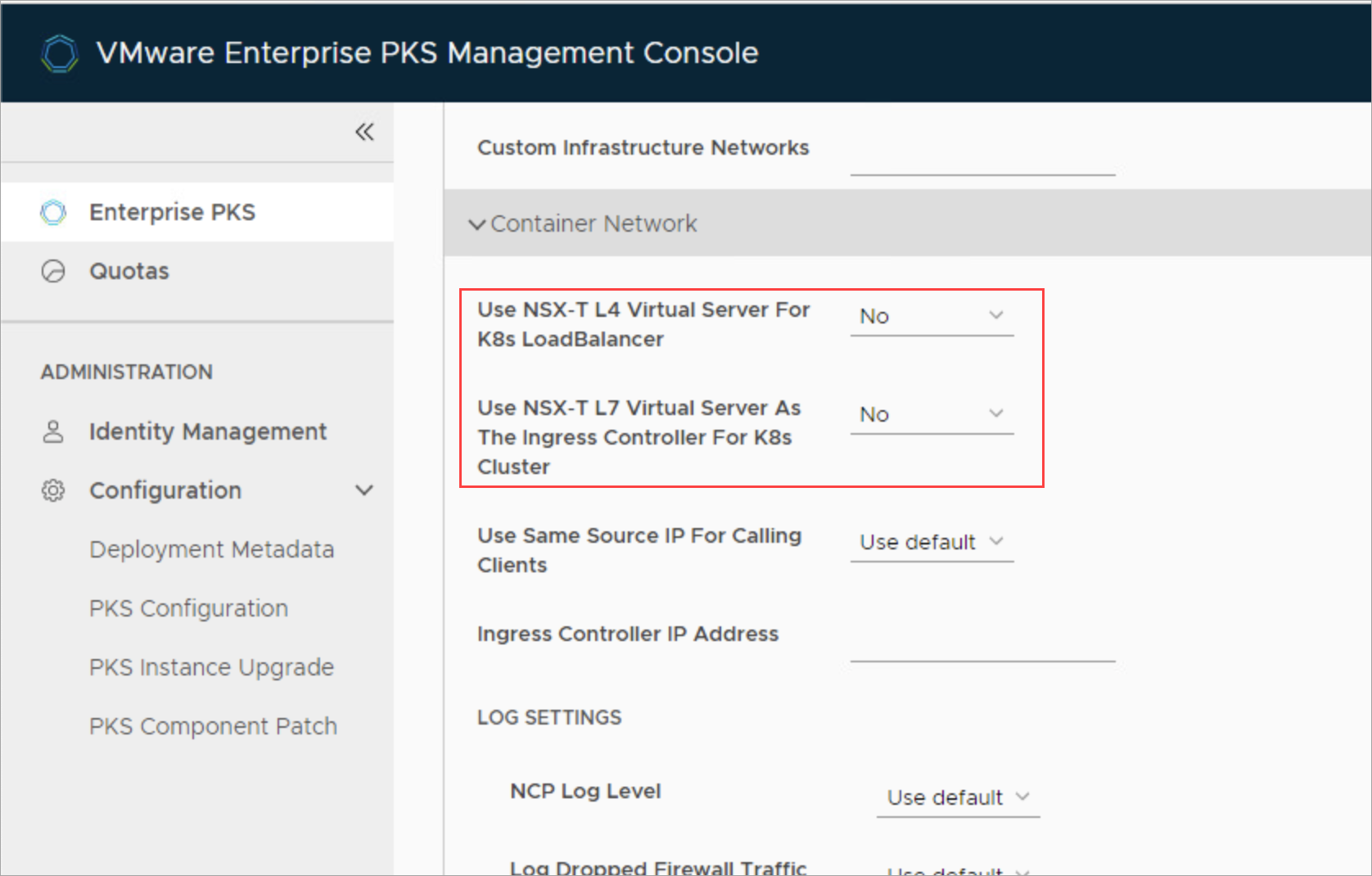

From the VMware Enterprise PKS Management Console, disable NSX-T load balancing for Kubernetes services on the cluster by setting the options Use NSX-T L4 Virtual Server For K8s Load Balancer and Use NSX-T L7 Virtual Server As The Ingress Controller For K8s Cluster to No.

AKO running on the cluster then syncs the LoadBalancer Services and Ingresses to the NSX Advanced Load Balancer instead.

Note: AKO supports only LoadBalancer Services and Ingress for applications created on the TKGI cluster. The Kubernetes API endpoint is still managed by the NSX-T load balancer, because it is configured directly by the BOSH automation.

Configure Avi IPAM and DNS

Configure the IPAM and DNS profiles as shown here and add the profiles to the cloud configuration.

Configure AKO

Use Helm to install AKO on the TKGI cluster as shown here.
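The values that tie AKO to this deployment (cluster name, Controller address, cloud, SE group, and VIP network) can be collected into an override file before running Helm. The following is a rough sketch only. The key names mirror those commonly found in the AKO chart's `values.yaml` but are assumptions here, as is the `ncp` value for the CNI plugin; confirm all of them against the chart version you install. The function name `build_ako_values` is hypothetical, and credentials are intentionally left out.

```python
import json


def build_ako_values(cluster_name, controller_host, cloud_name,
                     se_group, vip_network, vip_cidr, cni_plugin="ncp"):
    """Build a values override for the AKO Helm chart (key names are assumptions)."""
    return {
        "AKOSettings": {
            "clusterName": cluster_name,   # must be unique per TKGI cluster
            "cniPlugin": cni_plugin,       # assumed value for NCP-based clusters
        },
        "NetworkSettings": {
            # The VIP segment created on the Tier-1 gateway.
            "vipNetworkList": [{"networkName": vip_network, "cidr": vip_cidr}],
        },
        "ControllerSettings": {
            "controllerHost": controller_host,
            "cloudName": cloud_name,
            "serviceEngineGroupName": se_group,
        },
    }


def write_values(path, values):
    """Write the override as JSON, which is also valid YAML."""
    with open(path, "w") as fh:
        json.dump(values, fh, indent=2)
```

Because JSON is a subset of YAML, the written file should be usable with Helm's `-f` option, together with `--namespace avi-system` for the namespace named earlier in this document. When several TKGI clusters share one Controller and SE group, only the cluster name needs to differ between their override files.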

Document Revision History

| Date | Change Summary |
|---|---|
| October 08, 2021 | Published the article for Installing AKO on TKGI |